Why choose Atomic AMZ?


"I have worked with numerous agencies over the last 10 years trying to grow our Amazon channel, and we usually ended up bringing it back in-house. It's refreshing to find someone truly obsessed with Amazon growth and willing to do what it takes."

Jaden Gong, Dreame Operations

AI Discovery + Killer Listing + PPC = $$$’s

Our Playbook for Amazon Domination

Scaling on Amazon isn't about spending more. It's about fixing what's broken first. We connect listings, creative, catalog structure, and PPC into one system, optimized for how Amazon's AI actually works in 2026.

This is how established brands dominate discovery and scale profitably.

ALEXA AI OPTIMIZATION

We rebuild your Amazon presence for how Alexa AI actually evaluates products in 2026. Most sellers still optimize for keyword search, but Amazon changed the rules. We fix your listings, creative, and catalog structure based on how Amazon's AI systems work, not guesswork.

Why our Alexa approach works:

1. Catalog restructuring using Amazon's structured data architecture.

2. Content aligned with semantic similarity algorithms.

3. Weekly discovery performance and ranking analysis.

4. Built on Amazon's research papers, not generic tactics.

This isn't surface-level optimization—it's a complete rebuild for the AI era.

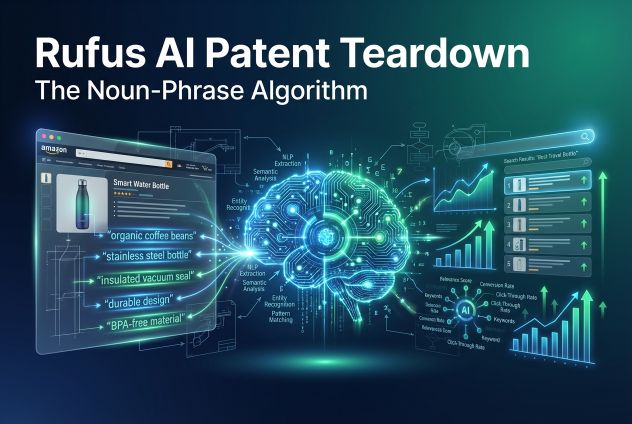

LISTING COPYWRITING

We write product listings that convert browsers into buyers. Your current copy was built for keyword stuffing, and Rufus doesn't care about that anymore. We create listings optimized for Amazon's AI discovery while maximizing conversion through benefit-driven copy, strategic feature callouts, and persuasive messaging that addresses real customer objections.

What makes our listings convert:

1. AI-optimized copy that gets discovered and converts traffic.

2. Benefit-focused messaging built on customer research and competitor analysis.

3. Strategic keyword integration without keyword stuffing.

4. A+ Content and Brand Story optimization.

Most agencies write generic product descriptions. We write conversion engines.

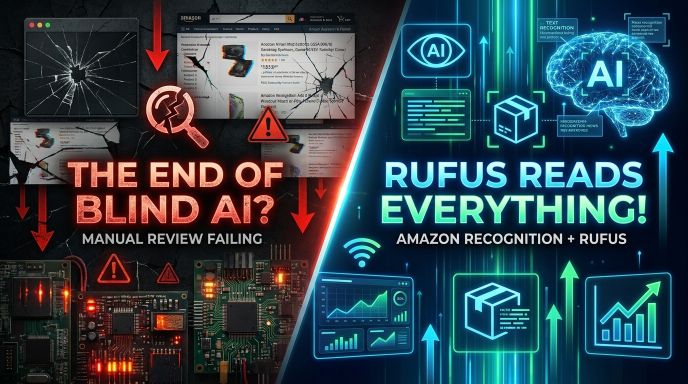

PRODUCT IMAGES & A+ CONTENT

We create high-converting product images and A+ Content optimized for Amazon's visual AI systems. Your images aren't just pretty pictures. They're conversion tools that need to work with Amazon Rekognition while persuading customers to buy. We design infographics, lifestyle shots, and A+ layouts that maximize click-through rates and conversions.

What makes our creative convert:

1. Images optimized for Amazon's visual AI recognition systems

2. Benefit-driven infographics that address customer objections

3. A+ Content layouts designed for mobile and desktop conversion

4. Strategic visual hierarchy that guides buyers to purchase

Pretty pictures don't sell—strategic creative does.

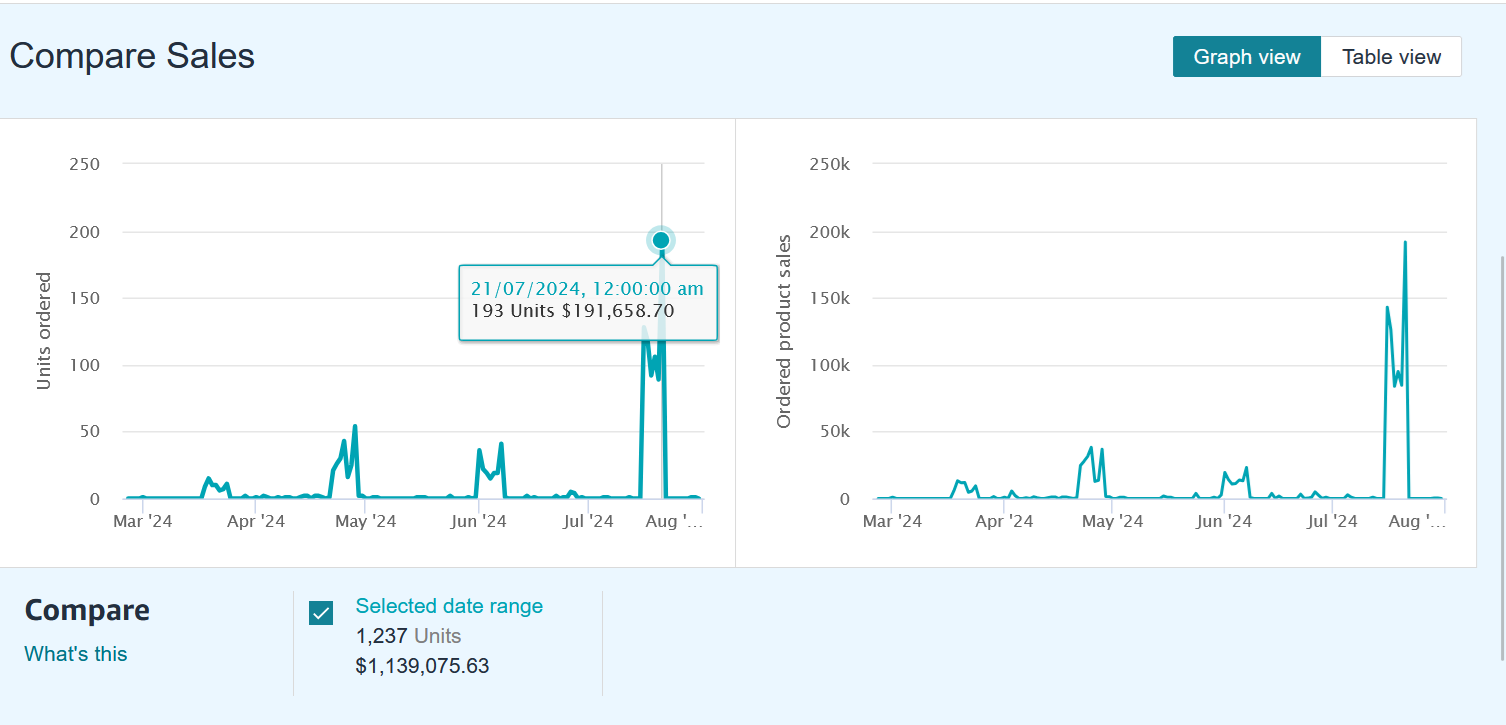

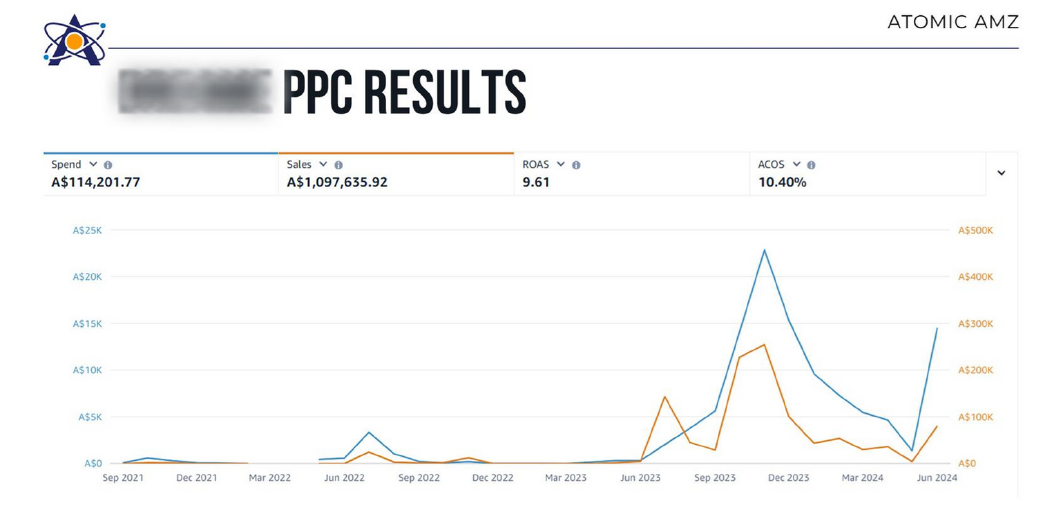

AMAZON PPC

We run Amazon advertising campaigns that scale profitably instead of burning budgets. Most agencies jump straight to PPC without fixing broken listings. We optimize your foundation first, then launch ads that actually convert. We manage Sponsored Products, Sponsored Brands, and Sponsored Display with data-driven bidding strategies and continuous optimization cycles.

How we turn ad spend into profit:

1. Foundation-first approach: fix listings before scaling ad spend

2. Smart bidding strategies optimized for ROAS and profitability

3. Weekly campaign optimization and negative keyword management

4. Performance tracking tied to actual business outcomes, not vanity metrics

Most agencies optimize for clicks—we optimize for profitable growth.

FULL ACCOUNT MANAGEMENT

We handle everything. Rufus AI optimization, listing rewrites, creative production, catalog restructuring, and PPC management—all coordinated into one growth system. No more juggling multiple agencies or trying to connect the dots yourself. We rebuild your entire Amazon presence for the AI era and scale it profitably with ongoing optimization and strategic execution.

What full account management includes:

1. Complete Amazon optimization: listings, creative, catalog, and advertising

2. Dedicated account team executing weekly optimization cycles

3. Strategic planning aligned with your business goals and inventory

4. Monthly reporting with actionable insights and growth recommendations

It's a complete Amazon growth partnership.

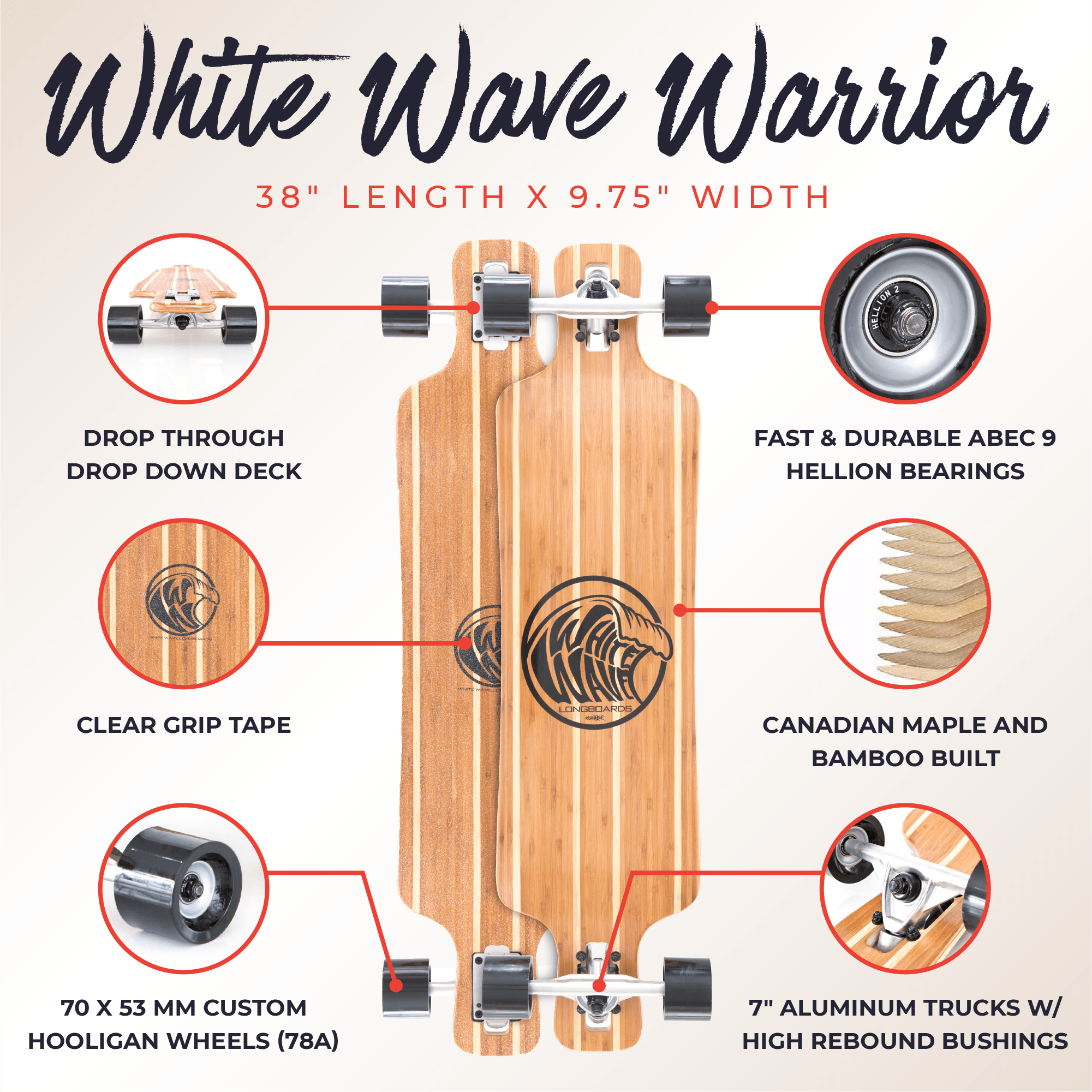

"Peter and his team consistently deliver outstanding results on Amazon for White Wave. I trust the Atomic AMZ team because they always execute on what they promise."

Josh Ostler, Founder


"We’ve been working with Peter and the team at Atomic AMZ for over a year now and it’s made a real difference to our Amazon business. We have full confidence in their strategy and support."

Jackie Ly

70mai

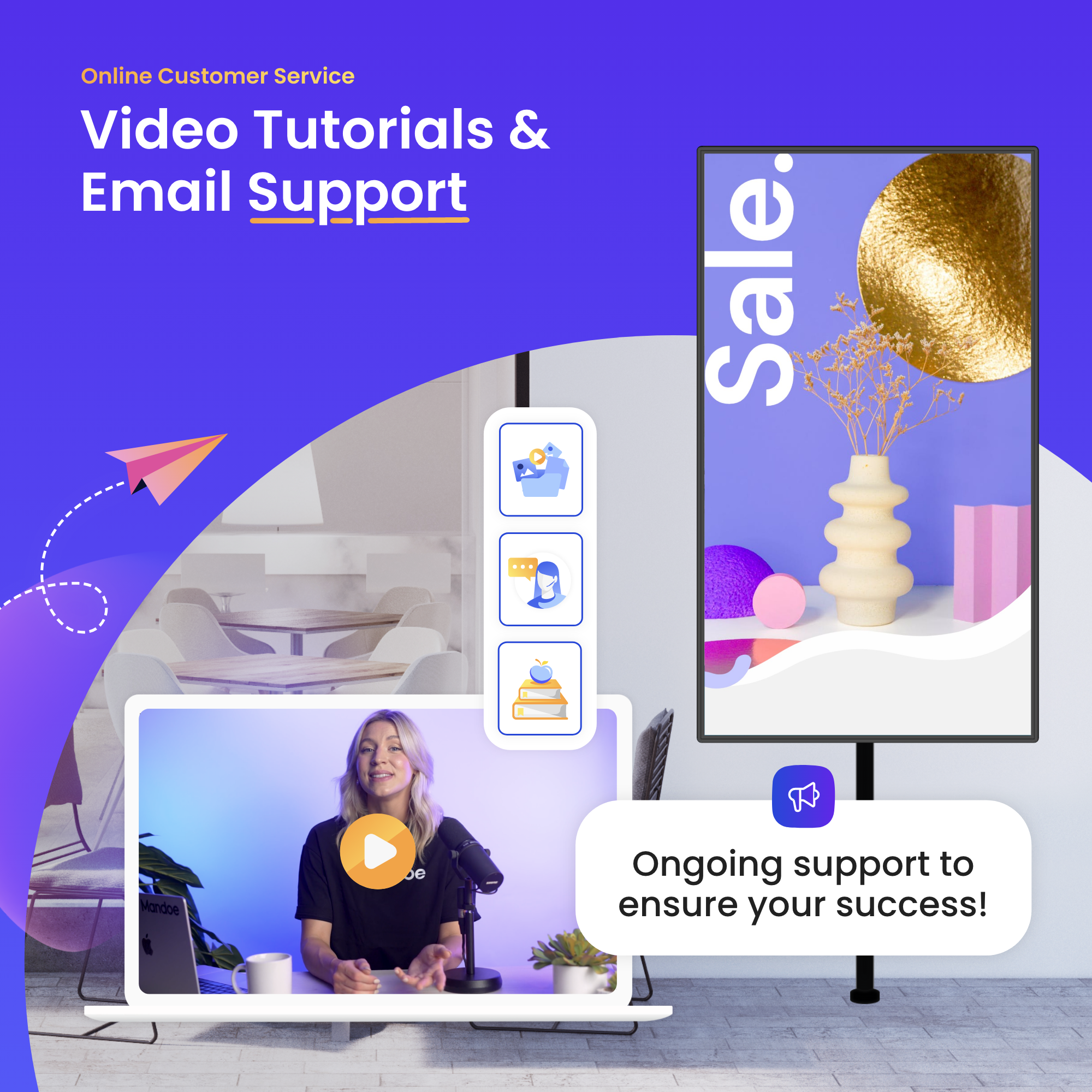

"Working with Peter helped us bring much more structure to our Amazon strategy, from listings through to PPC. His insights have made a real impact on how we approach the channel and continue to grow sales."

Vanessa Rizzo

Mandoe Media

Why partner with Atomic AMZ?

We Get Results. Period.

Top 1% Amazon Seller

10+ years in the trenches

No BS. Just Results

Fix first, then scale

Real Practitioners

We run our own brands

Rufus-obsessed

Built on Amazon's research

Elite Team

Not a generic agency

The #1 lever to Amazon discovery. AI Alexa not

Every listing, image, and A+ Content module is rebuilt for how Alexa AI discovers and ranks products. Our content wins by aligning Amazon's semantic algorithms with consumer psychology and market positioning.

Expertise

Backed by Years of Results

Plenty of agencies make noise. Few have the receipts.

We've spent 10+ years testing, refining, and executing Amazon strategies that actually work, as both a top 1% seller and an Amazon SPN Partner. Our approach is shaped by real data, pressure-tested through our own brands, and constantly evolving with Amazon's AI systems.

Peter Nobbs

CEO & FOUNDER

Top 1% Amazon seller. Built, scaled, and exited multiple 7-figure Amazon brands. Over 10 years on the platform. Amazon SPN Partner.

To Amazon Sellers

Most agencies talk about Amazon. I've lived it.

I moved to New York with my girlfriend (now wife). She got morning sickness from the smell of products stored under our bed. That's when I discovered Amazon FBA.

I scaled those products to multiple seven figures and sold to a private equity group.

That grind taught me something most Amazon agencies will never understand: watching your own ad spend drain with zero sales creates a different kind of desperation.

I don't manage accounts from theory. I manage them from operator experience.

I built Atomic AMZ for established brands who want Amazon growth without the guesswork. No junior reps. No long contracts. Direct execution backed by Amazon's actual research.

I take on 12 clients per year and work directly on every account.

If you're a real business ready to dominate Amazon discovery in 2026, let's talk.

"Atomic AMZ helped us grow our Amazon business from early traction to a major category leader. We couldn't have achieved this growth without their team. Looking forward to the next stage of scaling."

Alberto, Director of World Maps

Brands I've worked with over the years

Atomic AMZ Insights

Short & actionable insights from the Amazon trenches

Peter Nobbs

March 17, 2026 • 5 min read

Peter Nobbs

March 12, 2026 • 4 min read

Peter Nobbs

March 7, 2026 • 5 min read

Peter Nobbs

March 1, 2026 • 1 min read